No words, just tears.

In May 1971, the parade in Liverpool was unusual. After the FA Cup final, the legendary Bill Shankly addressed over 100,000 people from the steps of St. George’s Hall in Liverpool: “Since I’ve come here to Liverpool, to Anfield, I’ve drummed it into our players, time and again, that they are privileged to play for you”. In Shankly’s view, the people who matter most in a football club are the ones who come through the turnstiles.

At the time of the parade, Shankly’s influence was so big that someone close to him remarked: “If Bill Shankly had told that crowd to storm through the Mersey tunnel and seize Birkenhead, they’d have done it”.

No tunnel was stormed that day. The parade was unusual for another reason: it didn’t follow the winning of any trophy. The day before, Liverpool had lost the FA Cup final, and the supporters showed up just to show their support for the club.

・┈┈・┈┈・┈┈・

In his role as head coach, Bill Shankly had his own office at Anfield. The other members of the coaching staff had to adapt and settle for any corner they could find. In the mid-1960s, Joe Fagan – the coach of the reserve team – suggested using a football boot storage room as a makeshift meeting space. This is how the Boot Room was born — the place where the Diamond Dogs Shankly, Paisley, Fagan, and those around them made tactical decisions seated on a few crates of Guinness and surrounded by the lingering scent of worn football boots.

In the Boot Room everybody had a voice, trusted one another and shared the same dreams. Beyond the physical space, it was a philosophy that drove Liverpool’s success in the 1970s and 1980s — built, first and foremost, on the belief that the supporters are the heart of the club.

During Liverpool’s golden era, Joe Fagan wasn’t just the organizer, he was also “the glue that held everything together”. In 1983, Joe became manager himself, taking over from Bob Paisley, the club’s most successful figure to date. In his first season on the bench, Joe achieved a historic treble — the domestic league, the FA Cup and the European Cup — a first in British football history. How did he do it? “It’s the people in the club, it’s the people that make it, really. There’s no magic!”

・┈┈・┈┈・┈┈・

Joe Fagan remains to this day the last English manager to win the most wanted European club trophy. His time as Liverpool manager lasted only two seasons. While the first ended with the historic treble, the second ended with the Heysel disaster — one of the darkest moments in the club’s history. The events in Brussels, where 39 people died, affected Joe deeply, and he retired immediately after, never managing again.

“He returned from Belgium a broken man, seen crying on the shoulder of Evans as he stepped off the plane and barely able to comprehend what he had witnessed the previous evening.”

In 2001, he was laid to rest by the Liverpool supporters, but many Evertonians joined as well. Beyond the rivalry on the pitch, the Everton fans respected the humility and professionalism of a man who spent his entire life on Merseyside. A Scouser above all else.

・┈┈・┈┈・┈┈・

It wasn’t the first time Liverpool and Everton supporters were united in grief. After the Hillsborough tragedy in 1989, a chain of scarves was formed between the two stadiums, and a tabloid that had spread false information was boycotted across the entire city. Their togetherness means that even now, more than 40 years after the disaster, it is impossible to buy The Sun anywhere in Liverpool.

The sense of solidarity has been a defining characteristic of Liverpool in the recent past. After World War II, the entire area faced significant economic decline. The geography of the region made it difficult to quickly adapt to containerized shipping — which had become essential to global trade — and, later on, the UK’s accession to the European Economic Community shifted development toward other port cities on the country’s eastern coast. Unemployment and social tensions increased, and in 1981, British Prime Minister Margaret Thatcher was advised to apply a policy of “managed decline” in Liverpool. Confronted with hardship, the Scousers learned to take care of each other and found their solace in football; as if in response, the 1980s saw the city’s two clubs dominate the English game, securing eight of the ten league titles.

With support from the European Union, the city gradually recovered: in 2004, the old docklands were listed as a UNESCO World Heritage Site, and in 2008, Liverpool was named European Capital of Culture. But football was never quite the same after the Hillsborough tragedy. The dominance of Merseyside clubs faded shortly after, and the path back to success ran parallel with the fight for justice. It was only in 2016 that an independent inquiry concluded the Hillsborough disaster was caused by institutional failures — not by the behaviour of the supporters.

・┈┈・┈┈・┈┈・

“Honestly, years later I still can’t understand how we managed to lose that game”, Kaka told reporters in 2016, when asked what happened in the 2005 Champions League final. “We had the best defenders in the world in that team: Cafu, Jaap Stam, Nesta and Maldini but we still let in three goals in six minutes. Something amazing happened that can’t be explained.”

That You’ll Never Walk Alone at halftime, with Milan leading 3–0, echoed through the stadium walls and reached the dressing rooms. “This was the fans’ best performance throughout my career” said Steven Gerrard about the most impressive comeback in the history of European cup finals.

Had Joe Fagan still been with us, he might have replied to Kaka: “It was the people that made it, really. There was no magic!”

・┈┈・┈┈・┈┈・

The 2005 final, remembered as “The Miracle of Istanbul”, was an extraordinary chapter in the club’s history. But, as Shankly himself once said, the main thing — “our bread and butter” — remained the domestic league.

And after a 30-year wait, the Premier League trophy finally arrived for Liverpool in July 2020. But in front of an empty Anfield, the emotions were nowhere near the same. Covid denied the Liverpool supporters the celebration they’d been waiting for three long decades.

Five years have passed since then. On April 27, 2025, Liverpool won the title again, this time in front of a vibrant, roaring Anfield. Peter Drury, the live commentator, did not miss the cue:

“Liverpool are the champions. For their people. With their people. Everything that 2020 did not permit, in 2025, make no mistake, this party will last all night, all week, all month, all year.”

・┈┈・┈┈・┈┈・

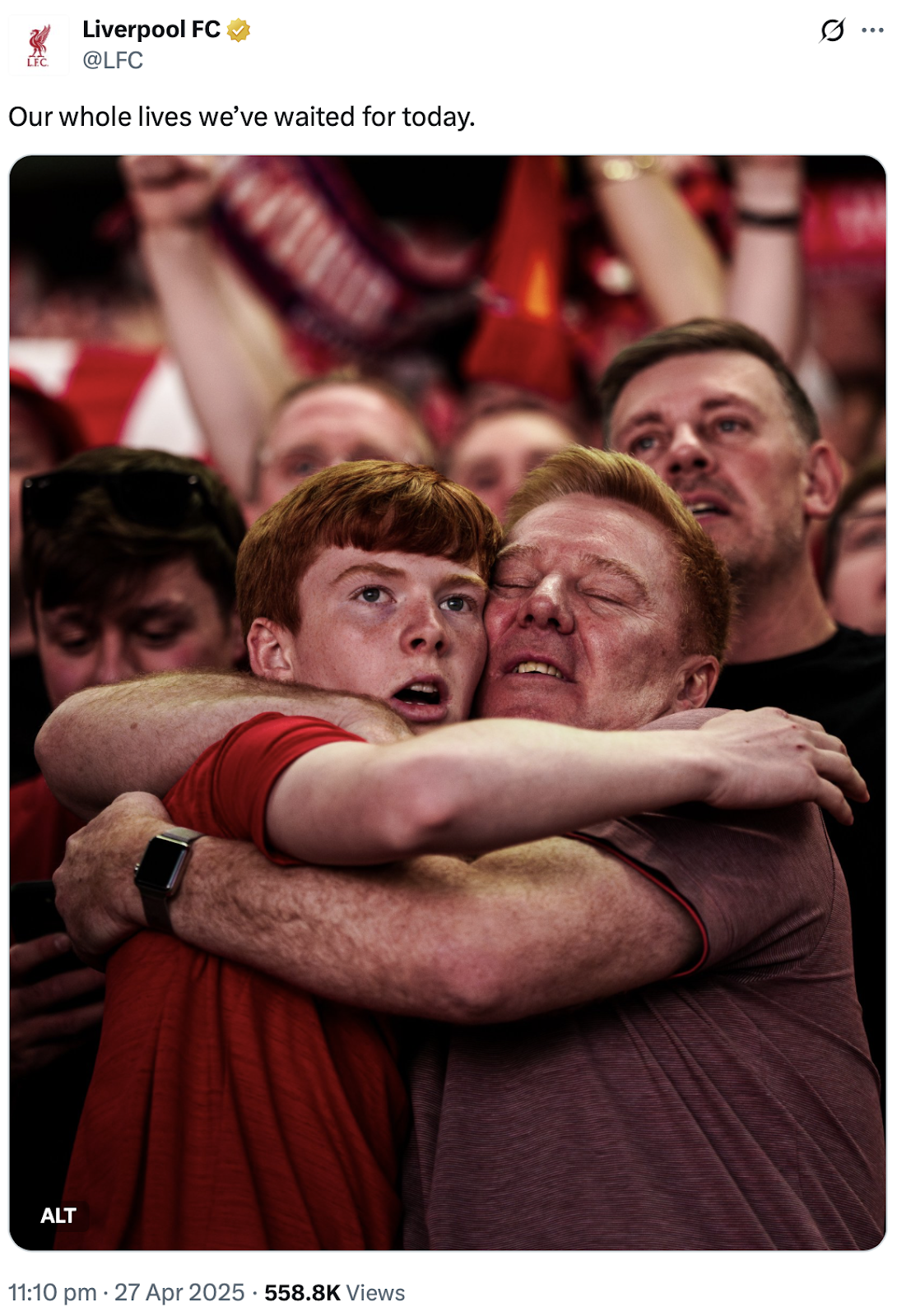

Moments later, as players and supporters chanted “You’ll Never Walk Alone” together, somewhere on Anfield, a father was hugging his son. On the father’s face you can see the relief, for finally having the chance to celebrate this moment with his son. But there is pain too: the memory of Hillsborough. Gerrard’s slip. The best friend who’s no longer there.

The young boy, in turn, looks moved by the raw emotion, almost as if waiting for confirmation that he isn’t dreaming:

A few weeks later, just before the final match of the season, the club shared a video interview with the two — Corey and Lawrence O’Connor. The interview offers a unique glimpse into the lives of people for whom going to the stadium, week after week, is an essential part of their life. “Watching football, it becomes the story of your life.”

For people like them, football is the journey through life — but the destination is more than winning the league trophy. It’s about the emotion of living these special moments together.

“Football is just the excuse; people are the reason.”

・┈┈・┈┈・┈┈・

A trophy-less parade.

Two kilometers of red and blue scarves.

A city that lives through football.

The promise of an unforgettable celebration.

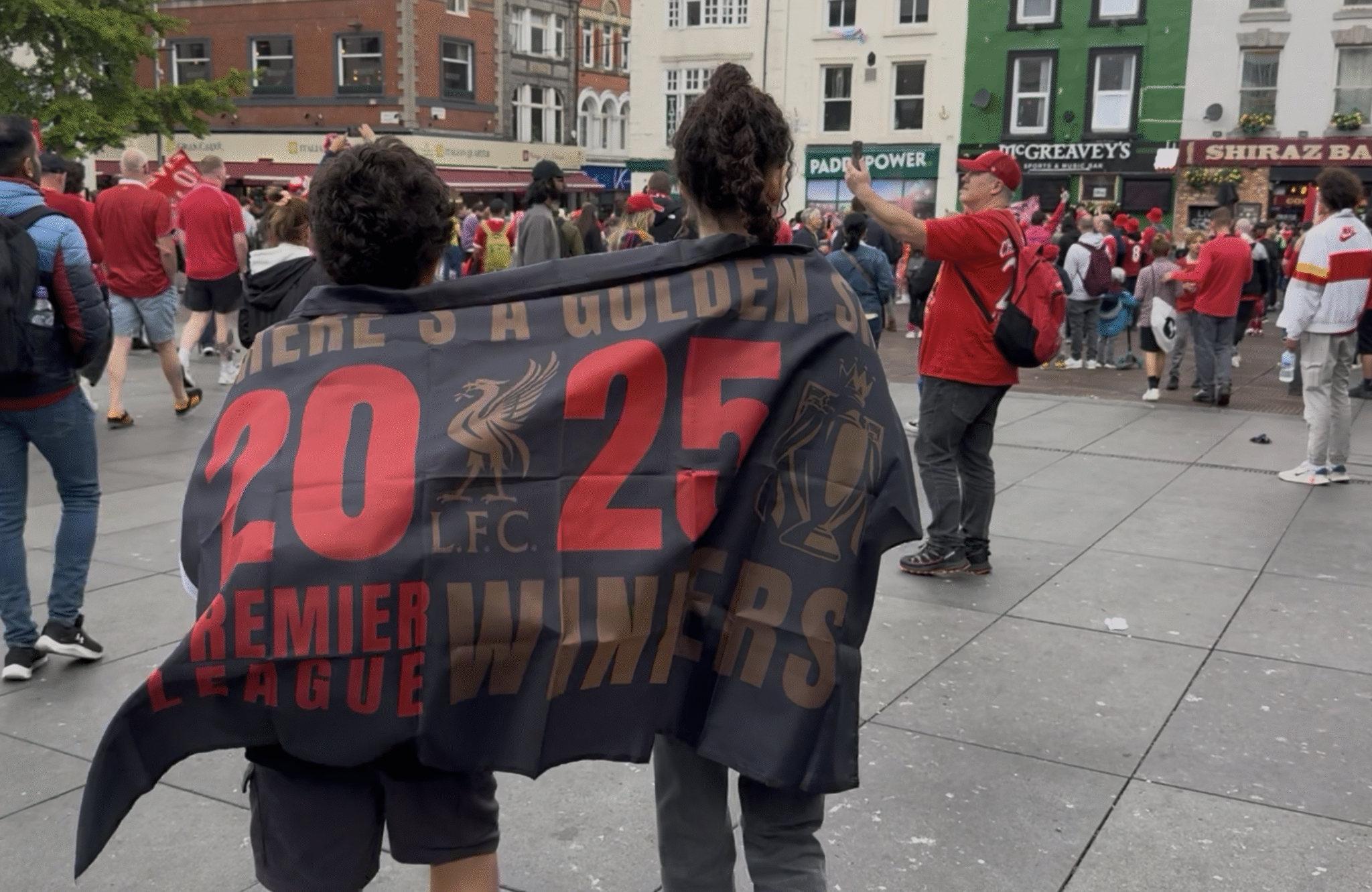

All of this convinced me that I had to be there in person, to witness the moment when, after 35 years, Liverpool supporters finally got to celebrate their most wanted trophy.

So on Monday, May 26, 2025, I arrived in Liverpool.

・┈┈・┈┈・┈┈・

First, I understood that rain can’t ruin a parade. I saw people willing to wait in the rain for more than eight hours, holding their spot just to get a good view of the parade along the main boulevard.

Then, I learned an important thing — that you never go to a parade alone: you go with the people you love. It’s one of those moments you look forward to, and when it finally happens, it’s more than you could have imagined.

Beyond football, I witnessed the solidarity of the locals: after the accident at the end of the parade, they stepped in to offer shelter, rides or internet to those in need. The scousers did what they knew best: they stood together.

The parade may or may not have passed in a few seconds, but the memories will certainly last forever.

・┈┈・┈┈・┈┈・

I don’t know how much winning the league meant to the club. But for many of the people of Liverpool, it meant everything.

Liverpool FC are Premier League champions

Written on 25 May 2025, 08:25am

My journey as a Liverpool FC supporter

Agueroooo was the moment I started paying more attention to Premier League football. I was already supporting Liverpool, so two years later, I suffered when Gerrard slipped, I witnessed Džeko’s brace from a few yards on Goodisson Park (the first Premier League game that I attended), and, another two days later, Crystanbul.

The next milestone was the arrival of Klopp, the normal one. I went to Anfield for a few European matches, I enjoyed the comeback against Borussia, and I slowly began to believe. But two lost finals later (2016 Sevilla and 2018 Ramos) I said it’s time to chill. It seemed like Liverpool won’t be successful anytime soon, in a time when football is a business and success is more about revenue and less about trophies.

So I did not see the 2019 Champions League trophy coming. I did not dare to hope, not even after that corner taken quickly. Never give up, sure, but I was still chill. I did not try to be on the stadium for the final, but winning it hit me in an unexpected and unrepeatable way.

Next season was supposed to be the one when the Premier League trophy is coming our way. And just when I was about to admit that I was transformed from doubter to believer, that Anfield game against Atletico happened, and then, the Covid stroke. Time to chill, again. The Premier League title eventually came, but winning it felt more like the moment you get a present only after you remind your loved ones that it was your birthday.

The coming seasons consolidated my theory that football remains a business first and foremost, and that the trophies are just a happy side effect. Like in the ‘Three body problem‘, the pre-Covid stable era turned almost overnight into a chaotic era: empty stadiums, broken legs, 2-7 defeats, last minute winning goals scored by the goalkeeper, dreams on quadruples and another final lost against the inevitable Madrid.

The departures of Wijnaldum, Origi, Mane and Firmino hurt, the YNWA rendition on the Santiago Bernabeu felt bittersweet, and Klopp’s departure became unavoidable (at least for me). So long, and thanks for all the fish.

And then comes Arne Slot, and with the same squad inherited from Klopp, manages to bring the Premier League title on Anfield, this time full and bouncing. A possible black swan event: historic, improbable (maybe less so for Ted Lasso), but that can, retrospectively, be explained. These explanations might come in another post; now it’s time to celebrate. If I learned something since that Aguerooo moment is that success comes and goes. So I’m enjoying this period without caring too much about the future.

Time to enjoy! 🍾

Pete Wylie – Heart as big as Liverpool